The group explored a “representative AI application designed to detect anomalies in real-time operational data” and considered the potential benefits and hazards of adopting this technology. Participants were international and included regulators, licensees, and technologists.

According to the report, the group recognized AI’s potential for improving safety margins, early detection of deviations, and reducing operational costs. They also highlighted the need to address challenges with AI explainability, ensure the maintenance of defense-in-depth measures, and support robust data assurance.

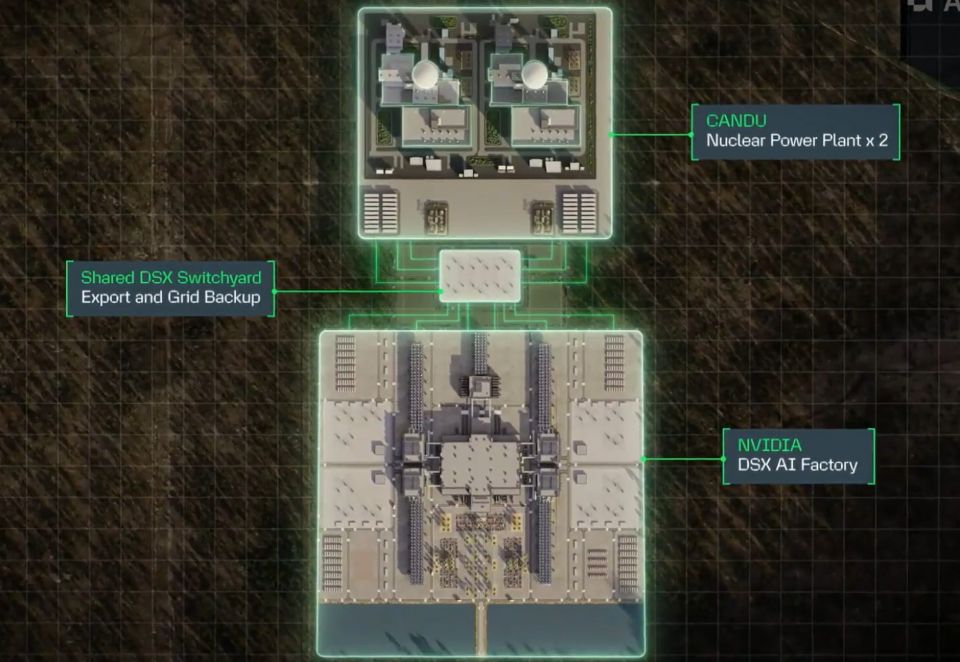

In some ways, nuclear facilities are a natural fit for adopting AI tools, as they already routinely collect large amounts of data for maintenance and operational purposes. As data are increasingly digitized, nuclear power plants become even better prepared to adopt AI tools.

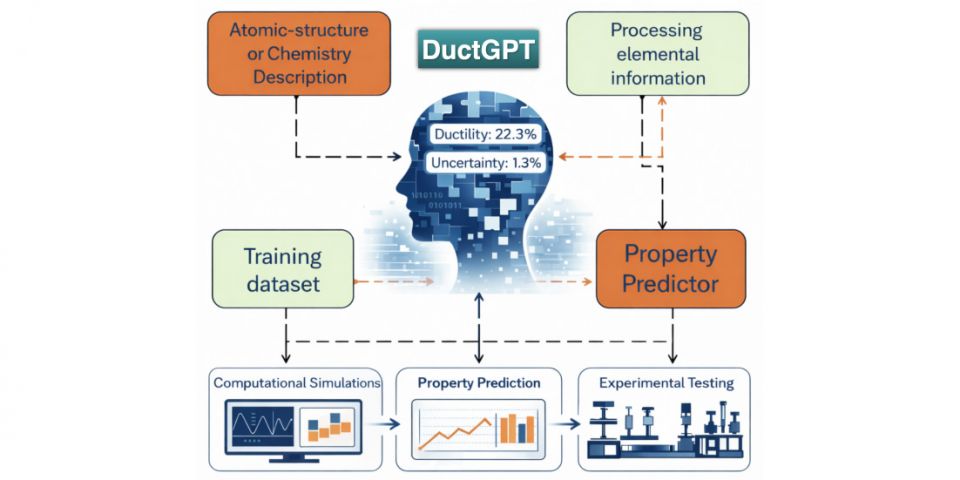

The report noted, however, that “AI is only as reliable as the information used to train it,” so industry-wide data standardization and quality assurance are key. Participants suggested independent data verification, data traceability and auditing, and tight boundaries that prevent an AI application from operating beyond the conditions it was trained on.
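
As a rough illustration of what such a "tight boundary" could look like in software, the sketch below refuses to produce an anomaly score when any input falls outside the range seen in training. It is not taken from the report: the `Envelope` class, the `guarded_score` function, and the signal names are hypothetical, and a simple per-signal min/max range is only one assumed way to define the trained conditions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Envelope:
    """Per-signal min/max observed in the training data (hypothetical design)."""
    lower: dict
    upper: dict

    @classmethod
    def from_training_data(cls, rows):
        # rows: non-empty list of dicts mapping signal name -> value
        names = list(rows[0].keys())
        lower = {n: min(r[n] for r in rows) for n in names}
        upper = {n: max(r[n] for r in rows) for n in names}
        return cls(lower, upper)

    def violations(self, sample):
        # A signal violates the envelope if it is missing or out of range.
        return sorted(
            n for n in self.lower
            if n not in sample or not (self.lower[n] <= sample[n] <= self.upper[n])
        )


def guarded_score(model, envelope, sample):
    """Call the model only when the sample lies inside the training envelope."""
    out = envelope.violations(sample)
    if out:
        # Do not extrapolate: report which signals left the envelope instead.
        return {"status": "out_of_envelope", "signals": out, "score": None}
    return {"status": "ok", "signals": [], "score": model(sample)}
```

In this sketch, an out-of-envelope sample is handed back to conventional monitoring with the offending signals named, rather than being scored by a model that has never seen comparable conditions.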

The exercise found that adopting AI tools in nuclear power plants raises many of the same anxieties as it does in other industries: workers’ fear of being replaced, the “black box” nature of the tools, and concerns about human skill degradation. However, the nuclear industry must also consider how AI tools could shift approaches to regulation and how the tools fit into the industry’s rigorous safety culture.

For example, when considering what it would look like to use AI to “justify plant actions that enable operations closer to the limits and conditions of operation,” participants doubled down on the need for diverse and redundant barriers. Maximizing AI explainability was considered generally important for all use cases, but for high-stakes decisions, participants emphasized the importance of maintaining a defense-in-depth approach. This could include hybrid modeling, in which AI output is corroborated by deterministic, rule-based layers or physics models, or in which it serves as a secondary analysis supplementing conventional methods.
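
One way to picture the hybrid arrangement is a decision function in which a deterministic limit check always takes precedence and the AI score can only add an advisory, never suppress an alarm. This is a minimal sketch under assumed names and thresholds (`rule_check`, `hybrid_decision`, `ai_threshold`, `low`, `high`), not a design described in the report.

```python
def rule_check(reading, low, high):
    """Deterministic layer: True when the reading is outside fixed limits."""
    return not (low <= reading <= high)


def hybrid_decision(ai_score, reading, *, ai_threshold=0.8, low=0.0, high=100.0):
    """Combine a rule-based limit check with an AI anomaly score.

    The thresholds are illustrative placeholders, not real plant limits.
    """
    if rule_check(reading, low, high):
        # The conventional barrier stands on its own, whatever the AI says.
        return "alarm"
    if ai_score >= ai_threshold:
        # The AI acts as a secondary analysis: it prompts human review only.
        return "advisory"
    return "normal"
```

Under these assumptions, a low AI score cannot clear a limit violation, which keeps the AI layer as a supplement to the conventional barrier and not a replacement for it.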

Participants noted that the nuclear sector has extensive experience with, and knowledge of, human decision-making during operations, and that this expertise can serve as the basis for setting expectations for AI decision-making.

Access to real-time data or plant status was considered a benefit for both operators and regulators, but this was also highlighted as a potential challenge, should it lead to a shift in legal responsibility or regulatory expectations.

The report suggested that the nuclear industry should get ahead of the curve, recommending the creation of a group that could develop technical guidance for best practices in the use of AI in nuclear applications. Among topics for consideration are expectations around AI safety envelopes, explainability, uncertainty standards, and validation protocols.

Participants also recognized the need for greater overlap in nuclear and AI expertise: AI developers must have an in-depth understanding of nuclear operations, and nuclear workers must gain expertise in any AI tools adopted.

The report concluded that participants perceived significant potential value in the use of AI for real-time monitoring in nuclear operations, but that technical justification remains an ongoing challenge and that for uses with higher safety consequences, AI explainability alone is insufficient.